Principles of Neuroimaging - 2015-2016

Latest revision as of 19:54, 30 September 2015

If you are a guest instructor, please read: Notes for Instructors.

Class Meetings

Mondays and Wednesdays from 2-4 pm in Room C9-420 of the Semel Institute on the first floor of the NPI.

Course Schedule & Syllabus

General Information

This is a Wiki: You are encouraged to post comments and clarifications.

Course Goals

The overall goal of this course, and of the NITP teaching program, is to give you a solid background in the concepts common to many types of neuroimaging, as well as a set of tools to think about and to analyze these images in the service of scientific hypothesis testing. There are ways of thinking about images that are shared across microscopy, positron emission tomography, EEG, X-ray, MRI and many others, and a good understanding of these will leave you prepared to take on not only the current armamentarium of imaging tools, but also the newer methods that will arise during your careers.

Extracting information from images always implies the existence of a model for that information. Generally, we seek to remove extraneous content (by filtering), and seek evidence in the images of data that conform to our model, usually by comparing what's in the image data to our model. This course concerns itself with themes in signal detection, statistical analysis, modeling, filtering, and evidence.

Our eyes act as filters, our prior experiences as hypotheses, our entire perceptual system as models. Likewise, the devices themselves instantiate models of the world or of the data we hope to detect. A mission of this course is to make us more aware of the implicit expectations built into all current imaging tools.

This year, we will explore emerging concepts in imaging, especially image sparsity: new and groundbreaking science.

Teaching Philosophy

At the graduate level, IMHO the courses are not about grades, but about learning at a professional level. I do not emphasize exams and papers but:

- This is a core course in several departments. Rigorous grading is required and,

- Preparing for evaluations tends to force one to think and consolidate information.

Much more important, however, is your commitment to reading the material and participating in class. This means challenging the lecturers and students to be clear about concepts, and to place their work in the broadest context possible.

Because the emphasis is on skills learning, as much as on content, I will prepare lectures and exercises on tools, including math, engineering and programming, that I hope will be useful to you for years into the future.

MATLAB will be required for the course. While I had tried in prior classes to allow students to use a variety of programming languages, I found that this made things complicated for everybody. Usually, the example data will be made available through the course web site and, in many cases, there will be MATLAB code associated with it, so that you can open the files and read the data. You can purchase student copies of MATLAB for $99 (which is a bargain, BTW). If, for some reason, this is a hardship, please let me know and I will make arrangements on your behalf. I will provide some basic training in the software, but you should go through the tutorials on your own.

From time to time, we might collect some live example data during the course and will need volunteers willing to participate. If you would like to volunteer to have your brain studied, please contact me.

Required Text

This year, as in the past, we will be informally using Signal Processing for Neuroscientists: An Introduction to the Analysis of Physiological Signals by Wim van Drongelen. It comes with a CD containing MATLAB code for the examples, all of which are based in neuroscience (e.g., EEG, spike trains, etc.). Intensive use of this book will start no sooner than week 3. You can obtain the book at the ASUCLA bookstore.

Further Reading

There are many links to reading materials on the Course Schedule & Syllabus M284A and Course Schedule & Syllabus M284B pages. If they are optional, it will say so.

For the statistics sections, I STRONGLY recommend

- The Cartoon Guide to Statistics - Gonick $17.95 new. This book is available at ASUCLA.

Some other excellent resource texts include:

- Matlab for Behavioral Scientists

- Matlab for Neuroscientists

- Understanding Digital Signal Processing - An easy to understand explanation of digital sampling and reviewing, various Fourier transforms, types of filtering, etc.

- Signal Processing Matlab Files - A Link to the Matlab file for the above book can be found here

- The Scientist and Engineer's Guide to Digital Signal Processing - FREE online DSP book with FREE downloads of each chapter in pdf format! I will occasionally post links on the syllabus page to chapters relevant to current course lectures (kmc).

Problem Sets

Problem sets will occur about once per week. Generally, you will have a week to work on them. These are often kept simple and mechanical so that you can learn the mechanics; some may be more challenging. You will use these skills in the midterm and final.

Please send these to Mark Cohen (mscohen@g.ucla.edu). If you do not get a response that your mail has been received, call or otherwise follow up with the instructors.

Please Always Include This Title Line: M284 2015 Problem Set, so that your mails are never lost.

Instructor Information

Mark Cohen can be reached at mscohen@g.ucla.edu. Telephone is 310-980-7453. Office hours will be after class on Mondays and Wednesdays in room 17-369 of the NPI.

Organizational notes

When sending mail about the course, please include the characters: NITP in the subject line somewhere, as that helps a great deal in file management. Thanks.

While most of the classes will be in lecture format, there will also be lab work in computing, electronics and image collection. It may be necessary to schedule these outside of standard class hours to accommodate the availability of the equipment we need.

Class List sign up

As soon as possible, please add yourself to the list of students in the class. Class List.

Please also send an email directly to Mark Cohen with your name, your best contact email and a subject line of "M284 class signup - NITP"

Auditing

Auditing means taking the course, though not for credit. Auditors are expected to attend all lectures, participate in discussions, and do all problem sets and tests. For my part, I will read and score the materials. If you do not intend to actually do the classwork, auditing is actively discouraged. If you miss several assignments, you will be asked to drop the course.

Catalog Course Description

Factors common to neuroimaging in multiple modalities including: Physiological Contrast mechanisms and Biophysics; Signal and Image processing, including transform approaches, Statistical Modeling and Inference, Time-Series Statistics, Detection Theory, Contrast Agents, Experimental Design, Modeling and Inference, Electrical Detection methods, Electroencephalography, Optical Methods, Microscopy.

Pre-Requisites

Functional Neuroanatomy (M292) and competence in 1) Integral calculus 2) Statistics 3) Electricity and Magnetism and 4) Computer Programming (any language). Waiver of some requirements may be possible by consent of the instructor.

The following are examples of the level of knowledge expected on entry. If you do not have this background please let Mark know as soon as possible. We will do our best to remediate any missing knowledge.

Stats

A general philosophy of the course and of the NITP is that a sophisticated consumer of images uses these data as a test of a hypothesis. You will learn more about the instructor's feelings about truth by p-values, but it is important to have a good intuitive understanding of random processes, noise, reliability, estimation, etc. For this reason, comfort with statistics is a must.

Here are a few questions that you should be easily able to find the answers to:

Given a sample of student heights at UCLA in inches:

- H("males") = [74, 71, 67, 69, 71, 70, 65, 67, 71, 68, 69, 66], and

- H("females") = [62, 66, 68, 62, 65, 62, 63, 64]

- Increase the number of females in the sample by eight, then perform a t-test on the means

- Continue collecting more data until the probability of a two-tailed t-test statistic comparing males and females is less than 0.01.

- Collect the heights of "all" males and females at UCLA and then calculate the t-statistic to determine if the heights differ at the assigned probability level

- Collect height data from an age-matched sample in the surrounding community.

- Add to the sample until there are exactly 100 males and 100 females, and calculate if the heights differ by more than 1%.

- None of the above.

- All of the above
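As a rough illustration of the arithmetic behind a two-sample t-test, here is a minimal sketch applied to the two height samples listed above. It is written in Python using only the standard library, purely so that it is self-contained; the course itself uses MATLAB. The function name `pooled_t` is hypothetical, and the sketch assumes equal variances in the two groups (the classic pooled form), which is one choice among several.

```python
from math import sqrt
from statistics import mean, variance

# Height samples (inches) copied from the list above.
males = [74, 71, 67, 69, 71, 70, 65, 67, 71, 68, 69, 66]
females = [62, 66, 68, 62, 65, 62, 63, 64]


def pooled_t(a, b):
    """Two-sample t statistic assuming equal variances; returns (t, df)."""
    n_a, n_b = len(a), len(b)
    df = n_a + n_b - 2
    # Pooled variance: sample variances weighted by their degrees of freedom.
    pooled_var = ((n_a - 1) * variance(a) + (n_b - 1) * variance(b)) / df
    # Standard error of the difference between the two sample means.
    se = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(a) - mean(b)) / se, df


t_stat, df = pooled_t(males, females)
mean_diff = mean(males) - mean(females)
print(f"mean difference = {mean_diff:.1f} in, t = {t_stat:.2f}, df = {df}")
```

With these numbers the means are 69 and 64 inches, giving t of about 4.51 on 18 degrees of freedom. A standard t table puts the two-tailed 0.01 critical value for 18 degrees of freedom at roughly 2.88. What that comparison does and does not license is exactly the kind of question the list above is probing.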

Programming

Formally, students are required to have a background in at least some programming language. The fact of the matter is that Neuroimaging is computationally intensive; programming is a basic skill for this work. I intend to prepare problem sets that will require programming to solve.

This year, all of our programming will be done using MATLAB, purchase of which is a course requirement. The ASUCLA student store has the licenses for students at an incredibly discounted price of $99. You will not regret owning this.

Many MATLAB tutorials can be found online. Here is a good interactive beginner tutorial from MathWorks. It takes about 2 hours and you must register with MathWorks beforehand, but it covers many aspects of MATLAB in depth (e.g. the workspace, importing data, visualizing data, scripts, functions, & loops).

Another useful option is the demo feature that can be accessed within MATLAB by typing 'demo' at the command prompt.

>> demo

This will open a help window of all available demos. Here are a few demos I recommend (kmc):

- Importing Data from Files

- Using Basic Plotting Functions

- Working with Arrays

- Manipulating Multidimensional Arrays

Mathematics

Can you solve for y or in these equations?

If , what is ?

If not, please let me know, and we will try to remedy things. In the meantime, there are a number of excellent online math tutorials. For matrices, may I suggest:

- Harvey Mudd mathematics online tutorial

- S.O.S. Mathematics

- MATLAB online tutorial

- Programmed text in Linear Algebra - Hefferon

These are all excellent free sources. Please feel free to suggest more.
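As an indication of the level intended, one of the exercises on this page asks for the inverse of a 2×2 matrix. A worked sketch, using the standard formula for the inverse of a 2×2 matrix (the symbols a, b, c, d are just labels for the entries):

$$
\begin{bmatrix} a & b \\ c & d \end{bmatrix}^{-1}
= \frac{1}{ad - bc}
\begin{bmatrix} d & -b \\ -c & a \end{bmatrix},
\qquad ad - bc \neq 0
$$

$$
\mathbf{Y} = \begin{bmatrix} 2 & 4 \\ 5 & 7 \end{bmatrix}^{-1}
= \frac{1}{(2)(7) - (4)(5)}
\begin{bmatrix} 7 & -4 \\ -5 & 2 \end{bmatrix}
= \begin{bmatrix} -\tfrac{7}{6} & \tfrac{2}{3} \\[2pt] \tfrac{5}{6} & -\tfrac{1}{3} \end{bmatrix}
$$

Multiplying the result by the original matrix returns the identity, which is a quick way to check the answer.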

Functional Neuroanatomy

Stand by. We hope to have a student-run functional neuroanatomy study group.

Concepts and Teaching Plan

We will start looking at a few papers that use images of various kinds to address neuroscientific questions. Here, you should be paying especial attention to how the images are used in a theoretical context. Did the investigator pose the question first then collect the data? What is the role of a posteriori interpretation (reverse inference)? What is assumed about the ground truth of the phenomena exposed by neuroimaging?

After this, we will begin to look at the properties of neurons that might make them visible to our neuroimaging tools. We will consider signaling in neurons, its energetic costs, and the changes in the cellular milieu that are associated. We will begin to consider the optical properties of neurons and their size scale, and the chemical changes that are associated with neuronal activity. As best possible, I will try to incorporate neurogenetics here to consider cell identification and labeling.

At the same time, we will start the practical work in MATLAB. If you are already MATLAB proficient, consider your assignment to include bringing the rest of the class up to speed as quickly as possible so that we can move on. As noted above, MATLAB will be used for our quantitative examples, but it is also a strong standard for image and numerical analysis in the sciences and a relatively easy programming language to use, with a pretty quick startup.

We will also start developing the mathematical tools we will need to carry forward. In the digital age, we are always dealing with very large numbers of data points and large sample sizes (at the very least, a large number of pixels), and we need means of quantitative summary. Our initial steps will be in very basic statistical concepts in anticipation of doing more and deeper work later.

This will be followed by work on analytic math, building to transform theory. Depending on what I find out about your skills level in maths, we may start with some calculus review, or we may have to schedule one-on-one meetings to balance everyone’s background. The goal here is to develop a framework with which to understand what happens to the ground truth data we try to observe as it is filtered through our imaging tools. There are very powerful mathematical tools that can be applied here, particularly the field known as linear systems analysis, which considers transfer functions and especially convolution. Each device we build or use can be analyzed, at least in part, within this framework. More importantly, for many classes of systems, the filtering they apply can be inverted – in some cases unblurring and recapturing much of the original data. Deconvolution is the general rubric under which we will try to analyze this process.
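For reference, the convolution mentioned above can be written out explicitly. This is the standard textbook form rather than notation taken from the course materials; the symbols f (the ground-truth signal), h (the impulse response of the imaging device), g (what is actually recorded) and n (additive noise) are labels assumed here for illustration.

$$
g(t) = (f * h)(t) + n(t) = \int_{-\infty}^{\infty} f(\tau)\, h(t - \tau)\, d\tau + n(t)
$$

For sampled data the integral becomes a sum:

$$
g[k] = \sum_{m} f[m]\, h[k - m] + n[k]
$$

In this picture, deconvolution is the problem of estimating f from the recorded g, given some knowledge of h and of the noise.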

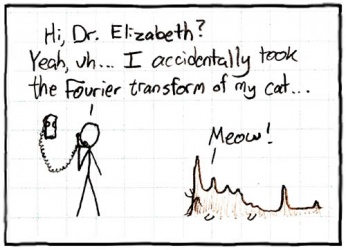

Mathematical transforms are, in general, ways to change the representation of equations into forms that are much easier to solve, or that offer additional insight into the underlying properties. We will look at a few transforms, particularly the Laplace transform and the Fourier transform. The latter is simply a means of expressing and quantifying the frequencies contained in a signal. The maths for these includes a little bit of trigonometry and some basic calculus. By the time we start on these topics, you should make yourself responsible for knowing how to integrate sines and cosines, and for reviewing the properties of the natural logarithm and its base, e. I will introduce, in class, the concepts and algebra of imaginary numbers, which we will need as well.
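As a preview of where the sines, cosines and imaginary numbers come together, here is one common way of writing the Fourier transform. Sign and normalization conventions vary between textbooks, so treat this as one convention assumed for illustration, not necessarily the one used in class.

$$
F(\omega) = \int_{-\infty}^{\infty} f(t)\, e^{-i\omega t}\, dt,
\qquad
e^{i\omega t} = \cos(\omega t) + i \sin(\omega t)
$$

One reason the transform is so useful for imaging is the convolution theorem: if a recorded signal g is the convolution of a true signal f with a device response h, then in the frequency domain the convolution becomes a simple product.

$$
g = f * h
\;\;\Longrightarrow\;\;
G(\omega) = F(\omega)\, H(\omega),
\qquad
F(\omega) = \frac{G(\omega)}{H(\omega)} \;\text{ wherever } H(\omega) \neq 0
$$

The division on the right is the idealized, noise-free form of deconvolution. With real data, frequencies where H is small amplify the noise, which is why practical methods need more care than a plain division.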

The essential results of the Fourier transform find their way into literally every means we have of neuroimaging, the statistical processing of images, concepts of noise and a host of other applications in neuroscience. I truly believe that, although you may find this material difficult, you will be happy about knowing it for the rest of your career as a scientist, making it well worth the effort.

Our first direct application of the analytic tools will be in the analysis and then creation of electrical circuits. We do this for several reasons. Unlike many real-world devices, electrical circuit elements: resistors, batteries, capacitors, inductors and operational amplifiers, act very much like their idealized representations, storing and converting energy in very predictable ways. The tools that have grown to analyze such circuit elements are very mature and quite powerful, making prediction of their behavior straightforward. For this reason, many real-world physics and imaging problems are modeled using electrical circuit elements where we can predict their input-output properties.
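To give a flavor of how predictable such elements are, consider the simplest example: a resistor R in series with a capacitor C, with the output voltage taken across the capacitor. This is a standard textbook low-pass filter, chosen here for illustration and not taken from the course materials; the component values below are hypothetical. Treating the circuit as a voltage divider with the capacitor's impedance 1/(iωC) gives the transfer function:

$$
H(\omega) = \frac{V_{\text{out}}}{V_{\text{in}}}
= \frac{\dfrac{1}{i\omega C}}{R + \dfrac{1}{i\omega C}}
= \frac{1}{1 + i\omega R C},
\qquad
|H(\omega)| = \frac{1}{\sqrt{1 + (\omega R C)^2}}
$$

The cutoff frequency, where the output amplitude falls to 1/√2 of the input, is

$$
f_c = \frac{1}{2\pi R C}
$$

For example, with R = 10 kΩ and C = 1 µF, RC = 0.01 s and the cutoff is about 15.9 Hz. (Engineering texts usually write j rather than i for the imaginary unit.)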

The second reason for looking at electrical circuits is that they are present in more or less every lab instrument you are likely to use. Towards the end of the first quarter, we will build, in class, an EEG system based on your understanding of these devices. This will also give us an entrée into the important study of noise, which is present in any experiment. We will look at the many sources of noise in neuroimaging and experiments, and consider ways in which modeling the noise can help us to reduce it. Conversely, we will discuss ways in which we can study the characteristics of the noise in order to better understand either our devices, or the actual features of our images.

We will cover principles of optics, emphasizing the issues of resolution, optical spectrum (frequency ranges), distortion and digital imaging. One way to think about the effects of lenses is as convolution filters (see above) that color the signal. Color, as used here, is a rather broad concept. The process of whitening the signal can be considered a deconvolution. Undoing the lens convolution is a way of removing the blur or distortion produced by a lens. As we go on, we will see this theme of convolution blurring and deconvolution sharpening applied to the many modalities used in modern neuroimaging. Similarly, statistical variance or noise can be reduced or at least better understood in this context, sharpening our statistical inferences and improving detection power.

Our next foray will be into electroencephalography (EEG), which is simply a measure of the differences in electrical voltage from point to point on the scalp or brain. In addition to looking at the biological basis of the EEG, we will build and test an EEG system in class and we will look at some software approaches to interpreting the EEG both as spatially-resolved (i.e., image) data and as cognitive/physiological signals.

Grading

Your final grades will be determined by the problem sets, the midterm and final, and by your class participation. Generally, the rubric is:

- Participation 10%

- Problem Sets 25%

- Midterm 30%

- Final 35%.

As you can see, your participation in the class is of major import, as I believe that everyone learns from other people's questions and comments.

As M284 is a required course for some students' continuation in several Ph.D. programs, grading will necessarily be rigorous.

![{\displaystyle \mathbf {Y} =\left[{\begin{array}{cc}2&4\\5&7\end{array}}\right]^{-1}}](https://wikimedia.org/api/rest_v1/media/math/render/svg/171b9836e76d7a57f5f6137bc678ce854ab8be4f)